Previous

Custom training scripts

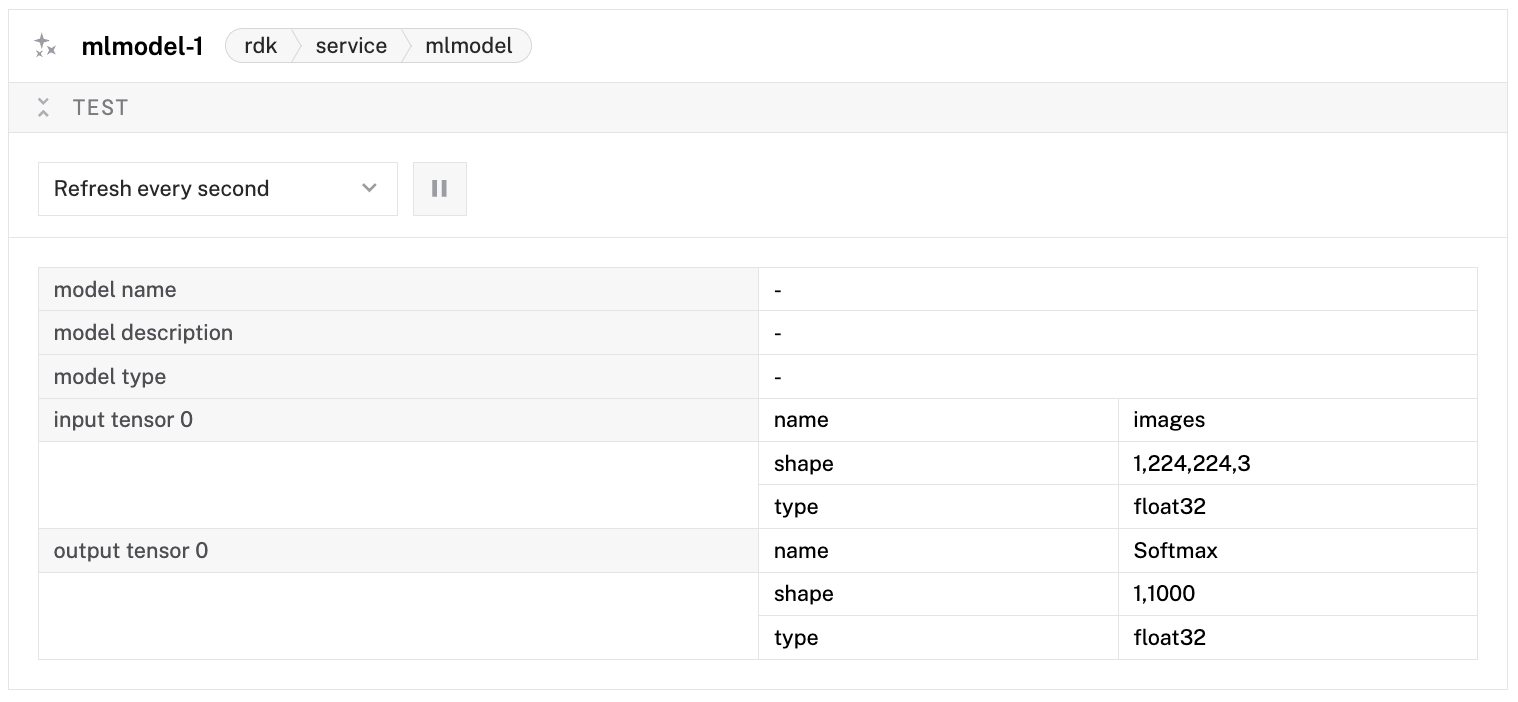

You have a trained machine learning model and a camera, and you want your machine to understand what it sees. This how-to configures both an ML model service and a vision service so that downstream how-to guides (Detect Objects, Classify Images, Track Objects) have a working vision pipeline to build on.

Most robotics platforms handle ML inference as a monolithic block: one configuration entry that loads a model and runs it against a camera. Viam splits this into two services for a reason.

The ML model service handles the mechanics of model loading: reading the file, allocating memory, preparing the inference runtime. The vision service handles the semantics: what does “run a detection” mean, how do you map model outputs to bounding boxes, how do you associate results with camera frames.

This separation means:

Viam currently supports the following frameworks:

| Model Framework | ML Model Service | Hardware Support | Description |
|---|---|---|---|
| TensorFlow Lite | tflite_cpu | linux/amd64, linux/arm64, darwin/arm64, darwin/amd64 | Quantized version of TensorFlow that has reduced compatibility for models but supports more hardware. Uploaded models must adhere to the model requirements. |
| ONNX | onnx-cpu, triton | Nvidia GPU, linux/amd64, linux/arm64, darwin/arm64 | Universal format that is not optimized for hardware-specific inference but runs on a wide variety of machines. |
| TensorFlow | tensorflow-cpu, triton | Nvidia GPU, linux/amd64, linux/arm64, darwin/arm64 | A full framework designed for more production-ready systems. |
| PyTorch | torch-cpu, triton | Nvidia GPU, linux/arm64, darwin/arm64 | A full framework that was built primarily for research. Because of this, it is much faster to do iterative development with (the model doesn’t have to be predefined) but it is not as “production ready” as TensorFlow. It is the most common framework for open-source models because it is the go-to framework for ML researchers. |

For some ML model services, like the Triton ML model service for Jetson boards, you can configure the service to use either the available CPU or a dedicated GPU.

Entry-level devices such as the Raspberry Pi 4 can run small ML models, such as TensorFlow Lite (TFLite) models. More powerful hardware, including the Jetson Xavier or a Raspberry Pi 5 with an AI HAT+, can process larger models, including TensorFlow and ONNX models. If your hardware does not support the model you want to run, see Cloud inference.

The ML model service loads a model file and exposes it for inference. The most common implementation is tflite_cpu, which runs TensorFlow Lite models on the CPU. Other implementations support ONNX, TensorFlow SavedModel, and PyTorch.

When you deploy a model from the Viam registry, the model files are downloaded to your machine and referenced with the ${packages.model-name} variable. You can also point directly to a local file path if you have a model file on disk.

The tflite_cpu implementation runs entirely on the CPU, so it works on machines without a GPU. For Raspberry Pi, Jetson Nano, and other edge machines, this is the standard approach. Performance depends on the model size: a MobileNet-based detector typically runs at 5-10 frames per second on a Raspberry Pi 4.

The vision service connects an ML model to a camera and provides high-level APIs for detection and classification. The mlmodel implementation takes detections or classifications from the ML model and returns them as structured data: bounding boxes with labels and confidence scores for detections, or label-confidence pairs for classifications.

The vision service is what your code interacts with. You never call the ML model service directly from application code. This means switching from a detection model to a classification model requires only a configuration change to the ML model service, not a rewrite of your application.

The vision service also handles the image capture pipeline. When you call GetDetectionsFromCamera, the vision service captures a frame from the specified camera, converts it to the format expected by the model, runs inference, and returns structured results. You do not need to manage image capture and model input formatting yourself.

ML models output numeric class IDs, not human-readable names. A detection model might output class ID 3, which means nothing to you. The label file maps these IDs to names: class 3 might be “dog”, class 7 might be “car”.

The label file is a plain text file with one label per line. The line number (zero-indexed) corresponds to the class ID. For example:

```
background
person
bicycle
car
motorcycle
```

In this file, class ID 0 is “background”, class ID 1 is “person”, and so on. When you configure the ML model service with a label_path, detections and classifications will use these human-readable names instead of numeric IDs.

If you do not provide a label file, detections will report numeric class IDs as their labels.
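The mapping from class ID to label can be sketched in a few lines of Python. This is only an illustration of the file format described above, not the ML model service's own loader; the helper names `load_labels` and `label_for` are hypothetical.

```python
from pathlib import Path
import tempfile


def load_labels(label_path: str) -> list[str]:
    """Read one label per line; the zero-based line index is the class ID."""
    text = Path(label_path).read_text(encoding="utf-8")
    return [line.strip() for line in text.splitlines()]


def label_for(class_id: int, labels: list[str]) -> str:
    """Return the label for a class ID, or the ID as text if no label exists."""
    if 0 <= class_id < len(labels):
        return labels[class_id]
    return str(class_id)


# Write the five-line example label file to a temporary location and load it.
with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "labels.txt"
    path.write_text("background\nperson\nbicycle\ncar\nmotorcycle\n", encoding="utf-8")
    labels = load_labels(str(path))

print(label_for(3, labels))   # car
print(label_for(42, labels))  # 42, because the file has no line for this ID
```

The fallback in `label_for` mirrors the behavior described above: without a matching label, the numeric class ID is reported instead.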

The service works with models from various sources:

ML models must have particular input and output tensor shapes to work with the mlmodel classification or detection models of Viam’s vision service.

See ML Model Design to design a modular ML model service with models that work with vision.

my-ml-model, and click Add component again to confirm.

After creating the service, configure it to point to your model file.

If you deployed a model from the Viam registry:

```json
{
  "name": "my-ml-model",
  "api": "rdk:service:mlmodel",
  "model": "tflite_cpu",
  "attributes": {
    "model_path": "${packages.my-model}/model.tflite",
    "label_path": "${packages.my-model}/labels.txt"
  }
}
```

The ${packages.my-model} variable resolves to the directory where the registry package was downloaded. Replace my-model with the name of your deployed model package.

If you have a local model file:

```json
{
  "name": "my-ml-model",
  "api": "rdk:service:mlmodel",
  "model": "tflite_cpu",
  "attributes": {
    "model_path": "/path/to/your/model.tflite",
    "label_path": "/path/to/your/labels.txt"
  }
}
```

The model_path is the path to the model file on the machine where viam-server is running. The label_path is optional but recommended: it maps numeric class IDs to human-readable names.
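If you generate machine configuration from a script, the same entry can be built programmatically. The following is a minimal sketch that only reproduces the JSON fields shown in this section; the function name `mlmodel_service_config` is hypothetical and not part of any Viam SDK.

```python
import json
from typing import Optional


def mlmodel_service_config(name: str, model_path: str,
                           label_path: Optional[str] = None) -> dict:
    """Build a tflite_cpu ML model service entry; label_path is optional."""
    attributes = {"model_path": model_path}
    if label_path is not None:
        # Only include label_path when a label file is available.
        attributes["label_path"] = label_path
    return {
        "name": name,
        "api": "rdk:service:mlmodel",
        "model": "tflite_cpu",
        "attributes": attributes,
    }


config = mlmodel_service_config(
    "my-ml-model",
    "/path/to/your/model.tflite",
    "/path/to/your/labels.txt",
)
print(json.dumps(config, indent=2))
```

Omitting the third argument produces an entry without label_path, in which case results carry numeric class IDs.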

When you add a model to the ML model service in the app interface, it automatically uses the latest version. In the ML model service panel, you can change the version in the version dropdown. Save your config to use your specified version of the ML model.

my-detector, and click Add component again to confirm.

Link the vision service to your ML model service:

```json
{
  "name": "my-detector",
  "api": "rdk:service:vision",
  "model": "mlmodel",
  "attributes": {
    "mlmodel_name": "my-ml-model"
  }
}
```

The mlmodel_name must match the name you gave your ML model service in step 1. This is how the vision service knows which model to use for inference.
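A mismatched name is an easy mistake to make when editing JSON by hand. As a rough sketch, you could check a configuration offline before saving it. The checker below is a hypothetical helper, not a Viam tool, and it assumes the configuration has a top-level services array with the fields used in this how-to.

```python
import json

CONFIG_JSON = """
{
  "services": [
    {"name": "my-ml-model", "api": "rdk:service:mlmodel", "model": "tflite_cpu",
     "attributes": {"model_path": "${packages.my-model}/model.tflite"}},
    {"name": "my-detector", "api": "rdk:service:vision", "model": "mlmodel",
     "attributes": {"mlmodel_name": "my-ml-model"}}
  ]
}
"""


def unresolved_mlmodel_names(config: dict) -> list[str]:
    """List vision services whose mlmodel_name matches no ML model service."""
    services = config.get("services", [])
    mlmodel_names = {
        s["name"] for s in services if s.get("api") == "rdk:service:mlmodel"
    }
    missing = []
    for s in services:
        if s.get("api") == "rdk:service:vision" and s.get("model") == "mlmodel":
            ref = s.get("attributes", {}).get("mlmodel_name")
            if ref not in mlmodel_names:
                missing.append(f"{s['name']} -> {ref}")
    return missing


problems = unresolved_mlmodel_names(json.loads(CONFIG_JSON))
print(problems or "all mlmodel_name references resolve")
```

An empty result means every mlmodel vision service points at an ML model service that exists in the same configuration.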

For the full list of mlmodel vision service configuration attributes, see Configure an mlmodel Detector or Classifier.

Click Save in the upper right. viam-server reloads automatically and initializes both services. You do not need to restart anything.

You can verify which capabilities your vision service supports by calling GetProperties. This returns whether the service supports detections, classifications, and 3D object point clouds.

my-detector).

If the camera feed appears but no detections are shown, point the camera at an object your model was trained to recognize. If you are using a general-purpose COCO model, try pointing the camera at a person, a cup, or a keyboard.

Your machine configuration should now include both services. The relevant sections look like this:

```json
{
  "services": [
    {
      "name": "my-ml-model",
      "api": "rdk:service:mlmodel",
      "model": "tflite_cpu",
      "attributes": {
        "model_path": "${packages.my-model}/model.tflite",
        "label_path": "${packages.my-model}/labels.txt"
      }
    },
    {
      "name": "my-detector",
      "api": "rdk:service:vision",
      "model": "mlmodel",
      "attributes": {
        "mlmodel_name": "my-ml-model"
      }
    }
  ]
}
```

Both services must be present in the configuration. The vision service depends on the ML model service, but viam-server resolves dependencies automatically, so the order in which they appear in the configuration file does not matter.

The mlmodel_name attribute is the only required field. The remaining attributes let you fine-tune how the vision service interprets model output:

| Attribute | Type | Required | Description |
|---|---|---|---|
| mlmodel_name | string | Required | Name of the ML model service to use. |
| default_minimum_confidence | float | Optional | Minimum confidence threshold (0.0-1.0) for all labels. Detections and classifications below this are filtered out. |
| label_confidences | object | Optional | Per-label confidence thresholds. Keys are label names, values are minimum confidence (0.0-1.0). Overrides default_minimum_confidence for specific labels. |
| label_path | string | Optional | Path to a labels file. Overrides the label file specified in the ML model service. |
| camera_name | string | Optional | Default camera to use when calling methods that take a camera name. |
| remap_input_names | object | Optional | Map model input tensor names to the names the vision service expects. Use when the model’s input names do not match the standard convention. |
| remap_output_names | object | Optional | Map model output tensor names to the names the vision service expects (for example, location, category, score). Use when the model’s output names differ from the expected names. |
| xmin_ymin_xmax_ymax_order | array of int | Optional | Specifies the order of bounding box coordinates in the model’s output tensor as indices [xmin, ymin, xmax, ymax]. Use when the model outputs coordinates in a non-standard order. |
| input_image_mean_value | array of float | Optional | Per-channel mean values for input image normalization (for example, [127.5, 127.5, 127.5]). Subtracted from each pixel before inference. |
| input_image_std_dev | array of float | Optional | Per-channel standard deviation values for input normalization (for example, [127.5, 127.5, 127.5]). Each pixel is divided by this after mean subtraction. |
| input_image_bgr | bool | Optional | Set to true if the model expects BGR channel order instead of RGB. Default: false. |

For most pre-trained models from the Viam registry, only mlmodel_name is needed. The advanced attributes are useful when working with custom models that have non-standard input/output formats.
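To make the two confidence attributes concrete, here is a small Python sketch of the filtering as the table describes it: a per-label threshold takes precedence over default_minimum_confidence for that label. This illustrates the documented semantics only and is not the vision service's implementation; the function name and the sample detections are made up for the example.

```python
from typing import Optional


def passes_threshold(label: str, confidence: float,
                     default_minimum_confidence: float = 0.0,
                     label_confidences: Optional[dict] = None) -> bool:
    """Keep a result if it meets its label's threshold, else the default."""
    thresholds = label_confidences or {}
    minimum = thresholds.get(label, default_minimum_confidence)
    return confidence >= minimum


# Hypothetical detections as (label, confidence) pairs.
detections = [("person", 0.82), ("car", 0.55), ("dog", 0.30)]

kept = [
    (label, conf)
    for label, conf in detections
    if passes_threshold(label, conf,
                        default_minimum_confidence=0.5,
                        label_confidences={"car": 0.7})
]
print(kept)  # [('person', 0.82)]
```

Here "car" is dropped because its per-label threshold of 0.7 applies instead of the default 0.5, and "dog" is dropped by the default.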

You can also use the ML model service directly to run raw tensor inference, without a vision service. This is useful for non-vision ML models or when you need to interpret the raw model output yourself.

The following code passes an image to an ML model service, and uses the Infer method to make inferences:

```python
import asyncio

import numpy as np
from viam.components.camera import Camera
from viam.media.utils.pil import viam_to_pil_image
from viam.robot.client import RobotClient
from viam.services.mlmodel import MLModelClient

# Configuration constants: replace with your actual values
API_KEY = ""  # API key, find or create in your organization settings
API_KEY_ID = ""  # API key ID, find or create in your organization settings
MACHINE_ADDRESS = ""  # the address of the machine you want to capture images from
ML_MODEL_NAME = ""  # the name of the ML model you want to use
CAMERA_NAME = ""  # the name of the camera you want to capture images from


async def connect_machine() -> RobotClient:
    """Establish a connection to the robot using the robot address."""
    machine_opts = RobotClient.Options.with_api_key(
        api_key=API_KEY,
        api_key_id=API_KEY_ID
    )
    return await RobotClient.at_address(MACHINE_ADDRESS, machine_opts)


async def main() -> int:
    async with await connect_machine() as machine:
        camera = Camera.from_robot(machine, CAMERA_NAME)
        ml_model = MLModelClient.from_robot(machine, ML_MODEL_NAME)

        # Get ML model metadata to understand input requirements
        metadata = await ml_model.metadata()

        # Capture image
        images, _ = await camera.get_images()
        image_frame = images[0]

        # Convert ViamImage to PIL Image first
        pil_image = viam_to_pil_image(image_frame)

        # Convert PIL Image to numpy array
        image_array = np.array(pil_image)

        # Get expected input shape from metadata
        expected_shape = list(metadata.input_info[0].shape)
        expected_dtype = metadata.input_info[0].data_type
        expected_name = metadata.input_info[0].name

        if not expected_shape:
            print("No input info found for 'image'")
            return 1

        if len(expected_shape) == 4 and expected_shape[0] == 1 and expected_shape[3] == 3:
            expected_height = expected_shape[1]
            expected_width = expected_shape[2]

            # Resize to expected dimensions
            if image_array.shape[:2] != (expected_height, expected_width):
                pil_image_resized = pil_image.resize((expected_width, expected_height))
                image_array = np.array(pil_image_resized)
        else:
            print("Unexpected input shape format.")
            return 1

        # Add batch dimension and ensure correct shape
        image_data = np.expand_dims(image_array, axis=0)

        # Ensure the data type matches expected type
        if expected_dtype == "uint8":
            image_data = image_data.astype(np.uint8)
        elif expected_dtype == "float32":
            # Convert to float32 and normalize to [0, 1] range
            image_data = image_data.astype(np.float32) / 255.0
        else:
            # Default to float32 with normalization
            image_data = image_data.astype(np.float32) / 255.0

        # Create the input tensors dictionary
        input_tensors = {
            expected_name: image_data
        }

        output_tensors = await ml_model.infer(input_tensors)
        print("Output tensors:")
        for key, value in output_tensors.items():
            print(f"{key}: shape={value.shape}, dtype={value.dtype}")

    return 0


if __name__ == "__main__":
    asyncio.run(main())
```

The following code passes an image to an ML model service, and uses the Infer method to make inferences:

```go
package main

import (
	"context"
	"fmt"
	"image"

	"gorgonia.org/tensor"

	"go.viam.com/rdk/components/camera"
	"go.viam.com/rdk/logging"
	"go.viam.com/rdk/ml"
	"go.viam.com/rdk/robot/client"
	"go.viam.com/rdk/services/mlmodel"
	"go.viam.com/utils/rpc"
)

func main() {
	apiKey := ""
	apiKeyID := ""
	machineAddress := ""
	mlModelName := ""
	cameraName := ""

	logger := logging.NewDebugLogger("client")
	ctx := context.Background()

	machine, err := client.New(
		ctx,
		machineAddress,
		logger,
		client.WithDialOptions(rpc.WithEntityCredentials(
			apiKeyID,
			rpc.Credentials{
				Type:    rpc.CredentialsTypeAPIKey,
				Payload: apiKey,
			})),
	)
	if err != nil {
		logger.Fatal(err)
	}

	// Capture image from camera
	cam, err := camera.FromProvider(machine, cameraName)
	if err != nil {
		logger.Fatal(err)
	}

	images, _, err := cam.Images(ctx, nil, nil)
	if err != nil {
		logger.Fatal(err)
	}

	img, err := images[0].Image(ctx)
	if err != nil {
		logger.Fatal(err)
	}

	// Get ML model metadata to understand input requirements
	mlModel, err := mlmodel.FromProvider(machine, mlModelName)
	if err != nil {
		logger.Fatal(err)
	}

	metadata, err := mlModel.Metadata(ctx)
	if err != nil {
		logger.Fatal(err)
	}

	// Get expected input shape and type from metadata
	var expectedShape []int
	var expectedDtype tensor.Dtype
	var expectedName string

	if len(metadata.Inputs) > 0 {
		inputInfo := metadata.Inputs[0]
		expectedShape = inputInfo.Shape
		expectedName = inputInfo.Name

		// Convert data type string to tensor.Dtype
		switch inputInfo.DataType {
		case "uint8":
			expectedDtype = tensor.Uint8
		case "float32":
			expectedDtype = tensor.Float32
		default:
			expectedDtype = tensor.Float32 // Default to float32
		}
	} else {
		logger.Fatal("No input info found in model metadata")
	}

	// Resize image to expected dimensions
	bounds := img.Bounds()
	width := bounds.Dx()
	height := bounds.Dy()

	// Extract expected dimensions
	if len(expectedShape) != 4 || expectedShape[0] != 1 || expectedShape[3] != 3 {
		logger.Fatal("Unexpected input shape format")
	}
	expectedHeight := expectedShape[1]
	expectedWidth := expectedShape[2]

	// Create a new image with the expected dimensions
	resizedImg := image.NewRGBA(image.Rect(0, 0, expectedWidth, expectedHeight))

	// Simple nearest neighbor resize
	for y := 0; y < expectedHeight; y++ {
		for x := 0; x < expectedWidth; x++ {
			srcX := x * width / expectedWidth
			srcY := y * height / expectedHeight
			resizedImg.Set(x, y, img.At(srcX, srcY))
		}
	}

	// Convert image to tensor data
	tensorData := make([]float32, 1*expectedHeight*expectedWidth*3)
	idx := 0

	for y := 0; y < expectedHeight; y++ {
		for x := 0; x < expectedWidth; x++ {
			r, g, b, _ := resizedImg.At(x, y).RGBA()

			// Convert from 16-bit to 8-bit and normalize to [0, 1] for float32
			if expectedDtype == tensor.Float32 {
				tensorData[idx] = float32(r>>8) / 255.0   // R
				tensorData[idx+1] = float32(g>>8) / 255.0 // G
				tensorData[idx+2] = float32(b>>8) / 255.0 // B
			} else {
				// For uint8, we need to create a uint8 slice
				logger.Fatal("uint8 tensor creation not implemented in this example")
			}
			idx += 3
		}
	}

	// Create input tensor
	var inputTensor tensor.Tensor
	if expectedDtype == tensor.Float32 {
		inputTensor = tensor.New(
			tensor.WithShape(1, expectedHeight, expectedWidth, 3),
			tensor.WithBacking(tensorData),
			tensor.Of(tensor.Float32),
		)
	} else {
		logger.Fatal("Only float32 tensors are supported in this example")
	}

	// Convert tensor.Tensor to *tensor.Dense for ml.Tensors
	denseTensor, ok := inputTensor.(*tensor.Dense)
	if !ok {
		logger.Fatal("Failed to convert inputTensor to *tensor.Dense")
	}

	inputTensors := ml.Tensors{
		expectedName: denseTensor,
	}

	outputTensors, err := mlModel.Infer(ctx, inputTensors)
	if err != nil {
		logger.Fatal(err)
	}

	fmt.Printf("Output tensors: %v\n", outputTensors)

	err = machine.Close(ctx)
	if err != nil {
		logger.Fatal(err)
	}
}
```

For configuration information, click on the model name:

You can use these publicly available machine learning models:

Verify the full pipeline is working by running a quick detection from code.

Install the SDK if you haven’t already:

```sh
pip install viam-sdk
```

Save this as vision_test.py:

```python
import asyncio

from viam.robot.client import RobotClient
from viam.services.vision import VisionClient


async def main():
    opts = RobotClient.Options.with_api_key(
        api_key="YOUR-API-KEY",
        api_key_id="YOUR-API-KEY-ID"
    )
    robot = await RobotClient.at_address("YOUR-MACHINE-ADDRESS", opts)

    detector = VisionClient.from_robot(robot, "my-detector")
    detections = await detector.get_detections_from_camera("my-camera")

    print(f"Found {len(detections)} detections:")
    for d in detections:
        print(f"  {d.class_name}: {d.confidence:.2f}")

    await robot.close()


if __name__ == "__main__":
    asyncio.run(main())
```

Run it:

```sh
python vision_test.py
```

For Go, create a module and fetch the RDK:

```sh
mkdir vision-test && cd vision-test
go mod init vision-test
go get go.viam.com/rdk
```

Save this as main.go:

```go
package main

import (
	"context"
	"fmt"

	"go.viam.com/rdk/logging"
	"go.viam.com/rdk/robot/client"
	"go.viam.com/rdk/services/vision"
	"go.viam.com/utils/rpc"
)

func main() {
	ctx := context.Background()
	logger := logging.NewLogger("vision-test")

	machine, err := client.New(ctx, "YOUR-MACHINE-ADDRESS", logger,
		client.WithDialOptions(rpc.WithEntityCredentials(
			"YOUR-API-KEY-ID",
			rpc.Credentials{
				Type:    rpc.CredentialsTypeAPIKey,
				Payload: "YOUR-API-KEY",
			})),
	)
	if err != nil {
		logger.Fatal(err)
	}
	defer machine.Close(ctx)

	detector, err := vision.FromProvider(machine, "my-detector")
	if err != nil {
		logger.Fatal(err)
	}

	detections, err := detector.DetectionsFromCamera(ctx, "my-camera", nil)
	if err != nil {
		logger.Fatal(err)
	}

	fmt.Printf("Found %d detections:\n", len(detections))
	for _, d := range detections {
		fmt.Printf("  %s: %.2f\n", d.Label(), d.Score())
	}
}
```

Run it:

```sh
go run main.go
```

You should see a list of detected objects with their confidence scores. If the list is empty, point the camera at objects your model recognizes.

Replace the placeholder values in both examples:

To confirm the full pipeline is working end-to-end:

If both tests pass, your vision pipeline is configured correctly and ready for use by downstream how-to guides.

Cloud inference enables you to run machine learning models in the Viam cloud instead of on a local machine. Cloud inference provides more computing power than edge devices, enabling you to run more computationally intensive models or achieve faster inference times.

You can run cloud inference using any TensorFlow or TensorFlow Lite model in the Viam registry, including unlisted models owned by or shared with you.

To run cloud inference, you must pass the following:

You can obtain the binary data ID from the DATA tab and the organization ID by running the CLI command viam org list.

You can find the model information on the MODELS tab.

```sh
viam infer --binary-data-id <binary-data-id> --model-name <model-name> --model-org-id <org-id-that-owns-model> --model-version "2025-04-14T16-38-25" --org-id <org-id-that-executes-inference>
```

```
Inference Response:
Output Tensors:
Tensor Name: num_detections
Shape: [1]
Values: [1.0000]
Tensor Name: classes
Shape: [32 1]
Values: [...]
Tensor Name: boxes
Shape: [32 1 4]
Values: [...]
Tensor Name: confidence
Shape: [32 1]
Values: [...]
Annotations:
Bounding Box Format: [x_min, y_min, x_max, y_max]
No annotations.
```

The command returns a list of detected classes or bounding boxes depending on the output of the ML model you specified, as well as a list of confidence values for those classes or boxes.

The bounding box output uses proportional coordinates between 0 and 1, with the origin (0, 0) in the top left of the image and (1, 1) in the bottom right.
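To draw or crop with these boxes you usually need pixel coordinates. A minimal sketch of the conversion, assuming you know the width and height of the source image (the function name `to_pixel_box` and the sample values are illustrative only):

```python
def to_pixel_box(box, image_width, image_height):
    """Convert a proportional [x_min, y_min, x_max, y_max] box to pixels."""
    x_min, y_min, x_max, y_max = box
    return (
        round(x_min * image_width),
        round(y_min * image_height),
        round(x_max * image_width),
        round(y_max * image_height),
    )


# A box covering the lower-middle of a 640x480 image.
pixel_box = to_pixel_box((0.25, 0.5, 0.75, 1.0), 640, 480)
print(pixel_box)  # (160, 240, 480, 480)
```

Because the origin is the top left, y values grow downward, so y_max is the bottom edge of the box.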

For more information, see viam infer.
